
Converting CBU MRI DICOM data to BIDS format

To start working with your MRI data, you need to convert the raw DICOM format data to NIfTI format and organise them according to the BIDS standard. This tutorial outlines how to do that. If you have any questions, please email Dace Apšvalka. The example scripts described in this tutorial are available on our GitHub repository.

To perform the conversion on your CBU MRI data, you need to be logged in to the CBU compute cluster.

Where is your raw data

The raw data from the CBU MRI scanner are stored at /mridata/cbu/[subject code]_[project code]. You can see all your participant scan directories by typing a command like this in a terminal (replace 'MR09029' with your project code):

ls -d /mridata/cbu/*_MR09029/*
/mridata/cbu/CBU090817_MR09029/20090803_083228/
/mridata/cbu/CBU090924_MR09029/20090824_095047/
/mridata/cbu/CBU090928_MR09029/20090824_164906/
/mridata/cbu/CBU090931_MR09029/20090825_095125/
...

The first part of the scan folder name (the folder inside [subject code]_[project code]) is the data acquisition date. For example, the data of the first subject in the example above were acquired on 3 August 2009 ('20090803'). Each subject's folder contains data like this:

ls /mridata/cbu/CBU090817_MR09029/20090803_083228
Series_001_CBU_Localiser/
Series_002_CBU_MPRAGE/
Series_003_CBU_DWEPI_BOLD210/
Series_004_CBU_DWEPI_BOLD210/
Series_005_CBU_DWEPI_BOLD210/
Series_006_CBU_DWEPI_BOLD210/
Series_007_CBU_DWEPI_BOLD210/
Series_008_CBU_DWEPI_BOLD210/
Series_009_CBU_DWEPI_BOLD210/
Series_010_CBU_DWEPI_BOLD210/
Series_011_CBU_DWEPI_BOLD210/
Series_012_CBU_FieldMapping/
Series_013_CBU_FieldMapping/

Each Series### folder contains DICOM files of the particular scan. The name of the folder is the same as what a radiographer named the scan in the MRI console. Usually, the name is very indicative of what type of scan it is. In the example above, we acquired a T1w anatomical/structural scan (MPRAGE), nine functional scans (BOLD), and two field maps. The 'Series_001_CBU_Localiser' scan is a positional scan for the MRI and can be ignored.
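The naming conventions described above can be unpacked programmatically, which is handy when you want to list acquisition dates or scan names for many participants. The following is a minimal Python sketch, not part of the tutorial's scripts. The function names are hypothetical, and reading the second part of the scan folder name as a time of day (HHMMSS) is an assumption.

```python
import re
from datetime import datetime
from pathlib import PurePosixPath


def parse_scan_dir(path):
    """Split a raw scan directory path into subject code, project code and acquisition time."""
    parts = PurePosixPath(path).parts
    # parts[-2] is '[subject code]_[project code]', parts[-1] is '[date]_[time]'
    subject_code, project_code = parts[-2].split("_", 1)
    # Assumption: the part after the date is the time of day as HHMMSS
    acquired = datetime.strptime(parts[-1], "%Y%m%d_%H%M%S")
    return subject_code, project_code, acquired


def parse_series_dir(name):
    """Split a 'Series_###_<scan name>' folder name into its number and scan name."""
    match = re.fullmatch(r"Series_?(\d+)_(.+)", name)
    if match is None:
        raise ValueError(f"Unexpected series folder name: {name}")
    return int(match.group(1)), match.group(2)


subject_code, project_code, acquired = parse_scan_dir(
    "/mridata/cbu/CBU090817_MR09029/20090803_083228"
)
print(subject_code, project_code, acquired.date())
print(parse_series_dir("Series_002_CBU_MPRAGE"))
```

The scan name returned by parse_series_dir is only a label chosen at the MRI console, so treat it as a hint about the scan type rather than a guarantee.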

Each of these folders contains DICOM (.dcm) files, typically one file per slice for structural scans or one file per volume for functional scans. These files contain a header with the metadata (e.g., acquisition parameters) and the image itself. DICOM is a standard format for any medical image, not just brain images. To work with the brain images, we need to convert the DICOM files to NIfTI format, which is a cross-platform and cross-software standard for brain images. Along with having the files in NIfTI format, we need to name and organise them according to the BIDS standard.

Several DICOM-to-BIDS conversion tools exist (see a full list here). We recommend using HeuDiConv.

DICOM to BIDS using HeuDiConv

HeuDiConv is available on our computing system as an Apptainer image:

/imaging/local/software/singularity_images/heudiconv/heudiconv_latest.sif

HeuDiConv converts DICOM (.dcm) files to NIfTI format (.nii or .nii.gz), generates their corresponding metadata files, renames the files and organises them in folders following BIDS specification.

The final result of DICOM Series being converted into BIDS for our example subject above is this:

├── sub-01
│   ├── anat
│   │   ├── sub-01_T1w.json
│   │   └── sub-01_T1w.nii.gz
│   ├── fmap
│   │   ├── sub-01_acq-func_magnitude1.json
│   │   ├── sub-01_acq-func_magnitude1.nii.gz
│   │   ├── sub-01_acq-func_magnitude2.json
│   │   ├── sub-01_acq-func_magnitude2.nii.gz
│   │   ├── sub-01_acq-func_phasediff.json
│   │   └── sub-01_acq-func_phasediff.nii.gz
│   ├── func
│   │   ├── sub-01_task-facerecognition_run-01_bold.json
│   │   ├── sub-01_task-facerecognition_run-01_bold.nii.gz
│   │   ├── sub-01_task-facerecognition_run-01_events.tsv
│   │   ├── sub-01_task-facerecognition_run-02_bold.json
│   │   ├── sub-01_task-facerecognition_run-02_bold.nii.gz
│   │   ├── sub-01_task-facerecognition_run-02_events.tsv
│   │   ├── sub-01_task-facerecognition_run-03_bold.json
│   │   ├── sub-01_task-facerecognition_run-03_bold.nii.gz
│   │   ├── sub-01_task-facerecognition_run-03_events.tsv
│   │   ├── sub-01_task-facerecognition_run-04_bold.json
│   │   ├── sub-01_task-facerecognition_run-04_bold.nii.gz
│   │   ├── sub-01_task-facerecognition_run-04_events.tsv
│   │   ├── sub-01_task-facerecognition_run-05_bold.json
│   │   ├── sub-01_task-facerecognition_run-05_bold.nii.gz
│   │   ├── sub-01_task-facerecognition_run-05_events.tsv
│   │   ├── sub-01_task-facerecognition_run-06_bold.json
│   │   ├── sub-01_task-facerecognition_run-06_bold.nii.gz
│   │   ├── sub-01_task-facerecognition_run-06_events.tsv
│   │   ├── sub-01_task-facerecognition_run-07_bold.json
│   │   ├── sub-01_task-facerecognition_run-07_bold.nii.gz
│   │   ├── sub-01_task-facerecognition_run-07_events.tsv
│   │   ├── sub-01_task-facerecognition_run-08_bold.json
│   │   ├── sub-01_task-facerecognition_run-08_bold.nii.gz
│   │   ├── sub-01_task-facerecognition_run-08_events.tsv
│   │   ├── sub-01_task-facerecognition_run-09_bold.json
│   │   ├── sub-01_task-facerecognition_run-09_bold.nii.gz
│   │   └── sub-01_task-facerecognition_run-09_events.tsv
│   └── sub-01_scans.tsv

All files belonging to this subject are in the sub-01 folder. The structural image is stored in the anat subfolder, field maps in fmap, and functional images in the func subfolders. Each file is accompanied by its .json file that contains the metadata, such as acquisition parameters. For the functional images, in addition to the metadata files, an events file is generated for each functional run. The file names follow the BIDS specification.
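To make the naming scheme concrete, here is a small Python sketch that splits a BIDS file name into its key-value entities, suffix, and extension. It is only an illustration of how the names above are built. The function name parse_bids_name is hypothetical, and the sketch does not validate names against the BIDS specification.

```python
def parse_bids_name(filename):
    """Split a BIDS file name into its entities, suffix and extension."""
    # Everything up to the first dot is the name, the rest is the extension
    stem, _, extension = filename.partition(".")
    # The last underscore-separated part is the suffix, the others are key-value entities
    *entity_parts, suffix = stem.split("_")
    entities = dict(part.split("-", 1) for part in entity_parts)
    return entities, suffix, "." + extension


entities, suffix, extension = parse_bids_name(
    "sub-01_task-facerecognition_run-01_bold.nii.gz"
)
print(entities, suffix, extension)
```

For the functional image above, the entities are sub, task, and run, the suffix is bold, and the extension is .nii.gz.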

HeuDiConv needs information on how to translate your specific DICOMs into BIDS. This information is provided in a heuristic file that the user creates. At the moment, at the CBU we don't use a standard for naming our raw scans in the MRI console. Therefore we don't have a standard heuristic (rules) that we could feed to HeuDiConv for any of our projects. You need to create this heuristic file yourself for your specific project. You can use existing examples as a guideline.

To create the heuristic file, you need to know what scans you have, which ones you want to convert (you don't have to convert all scans, only the ones you need for your project), and how to uniquely identify each scan based on its metadata.

As such, converting DICOM data to BIDS using HeuDiConv involves 3 main steps:

- Discovering what DICOM series (scans) there are in your data

- Creating a heuristic file specifying how to translate the DICOMs into BIDS

- Converting the data

Step 1: Discovering your scans

First, you need to know what scans there are and how to uniquely identify them by their metadata. You could look in each scan's DICOM file metadata manually yourself, but that's not very convenient. Instead, you can 'ask' HeuDiConv to do the scan discovery for you. If you run HeuDiConv without NIfTI conversion and heuristic, it will generate a DICOM info table with all scans and their metadata. Like this:

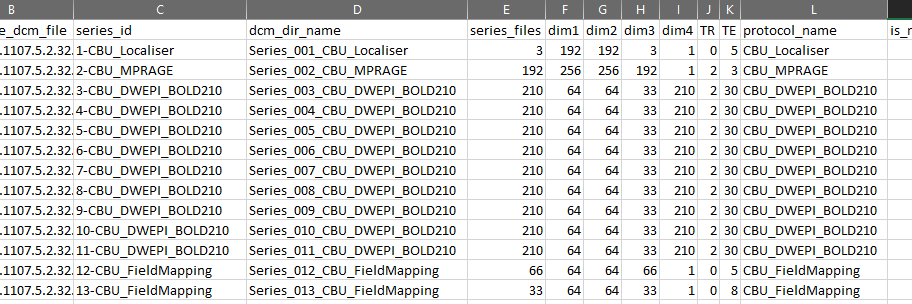

The column names are metadata fields and rows contain their corresponding values.

To get such a table, write a simple bash script like the dicom_discover.sh script explained below. You can create and edit the script in any text editor. The file extension for bash scripts is .sh.

A bash script must always start with #!/bin/bash.

Specify your project's directory. Below I specified my path for this tutorial.

PROJECT_PATH='/imaging/correia/da05/wiki/BIDS_conversion/MRI'

Specify the raw data directory for any of your project's subjects, let's say the first one. We are assuming here that all subjects in your project have the same type of scans acquired and we can use a single heuristic file for the project.

RAW_PATH='/mridata/cbu/CBU090942_MR09029'

Specify where you want the output data to be saved. I specified the output to go in the 'work' directory. The 'work' directory, in my case, is a temporary directory where I want my intermediate files to go. I will delete this directory once I have finished data preprocessing.

OUTPUT_PATH=$PROJECT_PATH/data/work/dicom_discovery/

Specify this subject's ID. In this case, it can be any, as we will only use this subject to get the information about DICOMs in our project.

subject="01"

To use the HeuDiConv container, you first need to load the 'apptainer' module.

module load apptainer

Now, we can run the apptainer container with the following parameters:

--cleanenv: The HeuDiConv container contains all dependencies internally, it doesn't need any of your environment settings. Therefore, to avoid any potential conflicts with your environment, we specify --cleanenv parameter to ensure that nothing from your environment is passed to the HeuDiConv environment.

--bind: A container is a small, self-contained environment that runs applications. It does not have access to files or folders on your computer. The --bind option in the apptainer command is used to bind/mount files and directories from your system into the container. You must bind everything that the container needs access to. Here we mount our project directory and the directory where the raw DICOM files are so that the container can access them.

path to the container image: we specify which container we want to use. In this case the HeuDiConv container located on our imaging system. All parameters after this, are specific to HeuDiConv.

--files: We specify that all our DICOM files are located in the '/mridata/cbu/CBU090942_MR09029/[all subdirectories]/[all subdirectories]/[all files ending with .dcm]'

--outdir: Where to output the results.

--heuristic: In this case, we want to discover all scans without applying any rules, so we use the built-in convertall heuristic.

--subjects: The subject's ID as it will appear in the output.

--converter: In this case, we don't want to do the file conversion to NIfTI, we just want to discover what files we have.

--bids: Flag for output into BIDS structure.

--overwrite: Flag to overwrite existing files.

apptainer run --cleanenv \
    --bind "${PROJECT_PATH},${RAW_PATH}" \
    /imaging/local/software/singularity_images/heudiconv/heudiconv_latest.sif \
    --files "${RAW_PATH}"/*/*/*.dcm \
    --outdir "$OUTPUT_PATH" \
    --heuristic convertall \
    --subjects "${subject}" \
    --converter none \
    --bids \
    --overwrite

It's good practice to unload the module at the end.

module unload apptainer

Once you have created and saved your script, you can execute it. To do that, in a terminal, navigate to the directory where your script is located (I recommend putting your scripts in the [PROJECT_PATH]/code/ directory), e.g., in my case it would be:

cd /imaging/correia/da05/wiki/BIDS_conversion/MRI/code

And then run this command:

./dicom_discover.sh

If everything is working fine, you will see an output like this:

Loading apptainer version:

1.0.3

WARNING: Could not check for version updates: Connection to server could not be made

INFO: Running heudiconv version 0.11.6 latest Unknown

INFO: Analyzing 7145 dicoms

...

INFO: PROCESSING DONE: {'subject': '01', 'outdir': '/imaging/correia/da05/wiki/BIDS_conversion/MRI/data/work/dicom_discovery/', 'session': None}

Once the processing has finished, the table that we are interested in will be located at OUTPUT_PATH/.heudiconv/[subject ID]/info/dicominfo.tsv. The .heudiconv directory is a hidden directory, so you might not be able to see it in your file browser. If so, either enable viewing of hidden files or copy dicominfo.tsv to some other location, for example, your home (U: drive) Desktop:

cp /imaging/correia/da05/wiki/BIDS_conversion/MRI/data/work/dicom_discovery/.heudiconv/01/info/dicominfo.tsv ~/Desktop

Now, you can open the file, for example, in MS Excel and keep it open for the next step - creating a heuristic file.
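If you prefer not to use a spreadsheet, the same table can be inspected with a few lines of Python. The sketch below reads a tab-separated table and prints the columns used later in the heuristic (series_id, protocol_name, dim3, dim4). To keep it self-contained it reads a small inline excerpt; the rows and their values are made up for illustration and are not taken from the real dicominfo.tsv.

```python
import csv
import io

# Illustrative excerpt only: the values below are invented to resemble the example subject.
SAMPLE_TSV = (
    "series_id\tprotocol_name\tdim3\tdim4\n"
    "2-CBU_MPRAGE\tCBU_MPRAGE\t192\t1\n"
    "3-CBU_DWEPI_BOLD210\tCBU_DWEPI_BOLD210\t33\t210\n"
    "12-CBU_FieldMapping\tCBU_FieldMapping\t66\t1\n"
    "13-CBU_FieldMapping\tCBU_FieldMapping\t33\t1\n"
)


def read_series(handle, columns=("series_id", "protocol_name", "dim3", "dim4")):
    """Return one dict per DICOM series, keeping only the requested columns."""
    reader = csv.DictReader(handle, delimiter="\t")
    return [{name: row[name] for name in columns} for row in reader]


# For a real table, replace io.StringIO(...) with open(path_to_dicominfo_tsv, newline="")
rows = read_series(io.StringIO(SAMPLE_TSV))
for row in rows:
    print(row["series_id"], row["protocol_name"], row["dim3"], row["dim4"], sep="\t")
```

Note that csv returns every value as a string, so convert dim3 and dim4 with int() before comparing them with numbers.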

Step 2: Creating a heuristic file

The heuristic file must be a Python file. You can create and edit Python files in any text editor, but it would be more convenient to use a code editor or, even better, an integrated development environment (IDE), such as VS Code.

You can name the file anything you want. For example, I named my file bids_heuristic.py; it is available here and explained below.

The only required function for the HeuDiConv heuristic file is infotodict. It is used to both define the conversion outputs and specify the criteria for associating scans with their respective outputs.

Conversion outputs are defined as keys with three elements:

- a template path

- output types (valid types: nii, nii.gz, and dicom)

- None - a historical artefact inside HeuDiConv needed for some functions

An example conversion key looks like this:

('sub-{subject}/func/sub-{subject}_task-taskname_run-{item}_bold', ('nii.gz',), None)

A function create_key is commonly defined inside the heuristic file to assist in creating the key, and to be used inside infotodict function.

def create_key(template, outtype=('nii.gz',), annotation_classes=None):
    if template is None or not template:
        raise ValueError('Template must be a valid format string')
    return template, outtype, annotation_classes

Next, we define the required infotodict function with seqinfo as input.

The seqinfo is a list of records, one per DICOM series, passed in by HeuDiConv and retrieved from the raw data path that you specify. Each item in seqinfo contains DICOM metadata that can be used to isolate the series and assign it to a conversion key.

We start with specifying the conversion template for each DICOM series. The template can be anything you want. In this particular case, we want it to be in BIDS format. Therefore for each of our scan types, we need to consult BIDS specification. A good starting point is to look at the BIDS starter kit folders and filenames. (See the full BIDS specification for MRI here.)

Following these guidelines, we define the template (folder structure and filenames) for our anatomical, fieldmap, and functional scans. A couple of important points to note:

For the fieldmaps, we add the key-value pair acq-func to specify that these fieldmaps are intended for the functional images, that is, to correct the functional images for magnetic field distortions. You will see how this key-value pair is relevant later.

For the functional scans, you have to specify the task name. In my case, the task was Face Recognition. The task name must be a single string of letters without spaces, underscores, or dashes!

In this example project, we had multiple functional scans with identical parameters and task names. They correspond to multiple runs of our task. Therefore we add a run key and its value will be added automatically as a 2-digit integer (run-01, run-02 etc.).
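The points above can be tried out in plain Python before touching HeuDiConv. The sketch below checks a task label and fills in the functional template with str.format to show what the {subject} and {item:02d} placeholders produce. HeuDiConv fills in these placeholders itself during conversion, so this is only an illustration. The function check_task_label is hypothetical, and allowing digits as well as letters follows the BIDS rule that labels are alphanumeric.

```python
import re


def check_task_label(label):
    """Raise an error if the task label contains anything other than letters and digits."""
    if not re.fullmatch(r"[A-Za-z0-9]+", label):
        raise ValueError(f"Invalid task label: {label!r}")
    return label


task = check_task_label("facerecognition")

# Same template as used for the functional runs, with the task name inserted
template = "sub-{subject}/func/sub-{subject}_task-" + task + "_run-{item:02d}_bold"

# {item:02d} pads the run number to two digits
names = [template.format(subject="01", item=run) for run in (1, 2, 9)]
for name in names:
    print(name)
```

A label such as face_recognition or face-recognition would be rejected by check_task_label, because the underscore and the dash are reserved as separators in BIDS file names.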

Next, we create a dictionary info with our template names as keys and empty lists for their values. The values will be filled in in the next step with the for loop.

The for loop loops through all our DICOM series that are found in the RAW_PATH that we specify in our HeuDiConv apptainer container parameters. Here we need to specify the criteria for associating DICOM series with their respective outputs. Now you need to look at the dicominfo.tsv table that we generated in the previous step.

We only have one DICOM series with MPRAGE in its protocol name. Therefore, for the anatomical scan, we don't need to specify any additional criteria.

We have two fieldmaps. One of them should be a magnitude image and the other one a phase image. Both have FieldMapping in their protocol name therefore we need to define additional criteria to distinguish between them. They differ in the dim3 parameter. If in doubt, ask your radiographer, but typically, the magnitude image has more slices (higher dim3).

Our 9 functional scans are the only ones with more than one volume (dim4). They are all different runs of the same task, therefore we don't need any other distinguishing criteria for them, just dim4. I specify dim4 > 100 here because it could happen that not all participants and not all runs had exactly 210 volumes collected. In addition, sometimes a run is cancelled due to some problem, and I want to discard any run with 100 volumes or fewer.

def infotodict(seqinfo):
    # Conversion templates (BIDS paths without the file extension)
    anat = create_key(
        'sub-{subject}/anat/sub-{subject}_T1w'
    )
    fmap_mag = create_key(
        'sub-{subject}/fmap/sub-{subject}_acq-func_magnitude'
    )
    fmap_phase = create_key(
        'sub-{subject}/fmap/sub-{subject}_acq-func_phasediff'
    )
    func_task = create_key(
        'sub-{subject}/func/sub-{subject}_task-facerecognition_run-{item:02d}_bold'
    )

    # Each template starts with an empty list of matching series
    info = {
        anat: [],
        fmap_mag: [],
        fmap_phase: [],
        func_task: []
    }

    # Assign each DICOM series to a template based on its metadata
    for s in seqinfo:
        if "MPRAGE" in s.protocol_name:
            info[anat].append(s.series_id)
        if (s.dim3 == 66) and ('FieldMapping' in s.protocol_name):
            info[fmap_mag].append(s.series_id)
        if (s.dim3 == 33) and ('FieldMapping' in s.protocol_name):
            info[fmap_phase].append(s.series_id)
        if s.dim4 > 100:
            info[func_task].append(s.series_id)

    return info

Finally, we add the POPULATE_INTENDED_FOR_OPTS dictionary to our heuristic file. This automatically adds an IntendedFor field to the fieldmap .json files, stating which images the fieldmaps should be used to correct for susceptibility distortion. Typically, these are the functional images. There are several ways to specify which images the fieldmaps are intended for. See full information here. In my example, I specify matching by the modality acquisition label. HeuDiConv works out which modality (anat, func, dwi) each fieldmap is intended for by checking the acq- label in the fieldmap filename and finding the corresponding modality. For example, acq-func will be matched with the func modality. That's why I added acq-func to the filenames when defining the templates for fmap_mag and fmap_phase.

POPULATE_INTENDED_FOR_OPTS = {
    'matching_parameters': ['ModalityAcquisitionLabel'],
    'criterion': 'Closest'
}

Other, more advanced functions can be added to the heuristic file as well, such as input file filtering. For full information, see the documentation.
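Before running the conversion, it can be worth checking the matching rules against a few mock series to confirm that every scan lands in exactly one category and that unwanted scans are ignored. The sketch below is one way to do this under stated assumptions: the Series tuple is a stand-in with only the four fields the rules use (real seqinfo entries carry many more), the classify function is hypothetical and simply repeats the tests from infotodict, and all series values are invented.

```python
from collections import namedtuple

# Mock of the few seqinfo fields used by the rules; real entries have more fields.
Series = namedtuple("Series", ["series_id", "protocol_name", "dim3", "dim4"])


def classify(series):
    """Return the labels of all rules that a series matches (same tests as in infotodict)."""
    labels = []
    if "MPRAGE" in series.protocol_name:
        labels.append("anat")
    if series.dim3 == 66 and "FieldMapping" in series.protocol_name:
        labels.append("fmap_mag")
    if series.dim3 == 33 and "FieldMapping" in series.protocol_name:
        labels.append("fmap_phase")
    if series.dim4 > 100:
        labels.append("func_task")
    return labels


# Invented values that resemble the example subject, plus one cancelled run
mock_seqinfo = [
    Series("1-CBU_Localiser", "CBU_Localiser", 3, 1),
    Series("2-CBU_MPRAGE", "CBU_MPRAGE", 192, 1),
    Series("3-CBU_DWEPI_BOLD210", "CBU_DWEPI_BOLD210", 33, 210),
    Series("4-CBU_DWEPI_BOLD210", "CBU_DWEPI_BOLD210", 33, 40),
    Series("12-CBU_FieldMapping", "CBU_FieldMapping", 66, 1),
    Series("13-CBU_FieldMapping", "CBU_FieldMapping", 33, 1),
]

results = {s.series_id: classify(s) for s in mock_seqinfo}
for series_id, labels in results.items():
    # A series matching more than one rule would be converted twice
    assert len(labels) <= 1, f"{series_id} matches several rules: {labels}"
    print(series_id, labels or "ignored")
```

Because the rules are independent if statements rather than an if/elif chain, a series can match more than one of them, which is why the check for multiple matches is useful.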

Step 3: Converting the data

Once our heuristic file is created, we are ready to convert our raw DICOM data to BIDS.

To do that, we need to make three changes in our dicom_discover.sh bash script that we wrote in the first step. I named the edited script `dicom_to_bids_single_subject.sh`.

- We need to change the output path because we don't want to put this result in the work directory but in the final data directory.

- We need to change the --heuristic parameter, specifying the path to our newly created heuristic file.

- We need to change the --converter parameter to dcm2niix because now we want the DICOM files to be converted to NIfTI format.

Once these changes are saved, you can run the script:

./dicom_to_bids_single_subject.sh

To convert other subjects as well, you'd need to change the raw path and subject ID accordingly. If you have multiple subjects, it's a good idea to process them all together on the CBU compute cluster using the scheduling system SLURM. See an example in the next section.

Converting multiple subjects in parallel

There are several ways you can submit compute jobs to SLURM to run parallel processing. I have created an example script showing how to convert raw data from multiple subjects to BIDS using SLURM. The example script dicom_to_bids_multiple_subjects.sh is available on the GitHub repository.

Note that you must run this script slightly differently than before! Now you must put sbatch before the script name to tell the job scheduler to run your script as a batch job:

sbatch dicom_to_bids_multiple_subjects.sh

The script is explained below.

In my example, I am converting three subjects. I already converted subject 01 in the previous step and now I will convert subjects 02, 03, and 04.

As always, a bash script must start with #!/bin/bash.

Next, you need to specify SBATCH options. For the full list of possible options, see the SBATCH documentation. I am here specifying my job name, where the output and error logs should be saved, and, most importantly, the job array.

It is very useful to save the batch script's error and output logs for later inspection. Make sure that the directory where you specify the logs to be saved exists!

The --array option specifies the job array indices and thereby how many jobs are executed. In my case, for my 3 subjects, I need 3 jobs (indices 0 to 2).

#SBATCH --job-name=heudiconv_%a
#SBATCH --output=/imaging/correia/da05/wiki/BIDS_conversion/MRI/data/work/heudiconv_job_%A_%a.out
#SBATCH --error=/imaging/correia/da05/wiki/BIDS_conversion/MRI/data/work/heudiconv_job_%A_%a.err
#SBATCH --array=0-2 # Adjust the array range to match the number of subjects, in this case, 3 (indexed from 0)

Next, specify your other project-specific parameters.

# Your project's root directory

PROJECT_PATH="/imaging/correia/da05/wiki/BIDS_conversion/MRI"

# Location of the output data

OUTPUT_PATH=$PROJECT_PATH/data/

# Your MRI project code, to locate your data

PROJECT_CODE="MR09029"

# List of subject CBU codes as they appear in the /mridata/cbu/ folder

SUBJECT_CBU_CODES=(

"CBU090938" # Subject 02

"CBU090964" # Subject 03

"CBU090928" # Subject 04

)

# Create a list of subject IDs as they will appear in the BIDS dataset

SUBJECT_LIST=(02 03 04)

# Location of the heudiconv heuristic file

HEURISTIC_FILE="${PROJECT_PATH}/bids_heuristic.py"

You don't have to change anything in the rest of the script. What the script does is the following:

- Creates a list of paths to the raw data for each subject.

RAW_PATH_LIST=()
for subject in "${SUBJECT_CBU_CODES[@]}"; do
    SUBJECT_PATH="/mridata/cbu/${subject}_${PROJECT_CODE}"
    RAW_PATH_LIST+=("$SUBJECT_PATH")
done

# The above loop is equivalent to:
# RAW_PATH_LIST=(
#     '/mridata/cbu/CBU090938_MR09029'
#     '/mridata/cbu/CBU090964_MR09029'
#     '/mridata/cbu/CBU090928_MR09029'
# )

- Checks if the specified heuristic file exists.

if [ ! -f "$HEURISTIC_FILE" ]; then
    echo "Heuristic file not found: ${HEURISTIC_FILE}. Exiting..." >&2
    exit 1
fi

- Gets the subject ID for the current job/subject.

subject="${SUBJECT_LIST[$SLURM_ARRAY_TASK_ID]}"

- Gets the path to the raw data for the current job/subject and checks if the path exists.

RAW_PATH="${RAW_PATH_LIST[$SLURM_ARRAY_TASK_ID]}"

# Check if the raw data path exists.
if [ ! -d "$RAW_PATH" ]; then
    # Report the missing path itself (the original message used an undefined variable)
    echo "Raw data path not found: ${RAW_PATH}. Exiting..." >&2
    exit 1
fi

And, finally, the script loads the apptainer module and runs HeuDiConv the same way as when we converted a single subject. The only difference to note is that here I explicitly bound the heuristic file to the container. In my example, it is not really necessary as the heuristic file is located in my PROJECT_PATH, which I bind to the container anyway. But somebody else using this script might have their heuristic file located somewhere else, for example, in some other project's directory if using the same heuristic across projects. This way the script is more flexible for reuse.

# Load the apptainer module

module load apptainer

# Run the container

apptainer run --cleanenv \
    --bind "${PROJECT_PATH},${RAW_PATH},${HEURISTIC_FILE}" \
    /imaging/local/software/singularity_images/heudiconv/heudiconv_latest.sif \
    --files "${RAW_PATH}"/*/*/*.dcm \
    --outdir "$OUTPUT_PATH" \
    --heuristic "${HEURISTIC_FILE}" \
    --subjects "${subject}" \
    --converter dcm2niix \
    --bids \
    --overwrite

Run the script as a batch job by putting sbatch before the script name, which tells the job scheduler to run it as a batch job:

sbatch dicom_to_bids_multiple_subjects.sh

Once you have submitted the script, you can ask the job scheduler whether your jobs are running. The command for that is squeue -u [your ID], in my case squeue -u da05.

[da05@login-j05 MRI]$ squeue -u da05

JOBID PARTITION NAME USER ST TIME NODES NODELIST(REASON)

3246338_0 Main heudicon da05 R 0:06 1 node-k08

3246338_1 Main heudicon da05 R 0:06 1 node-k08

3246338_2 Main heudicon da05 R 0:06 1 node-k08

If you specified the error and output log locations, you can check them for any progress or to see what the error was in case the job didn't run successfully.
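The link between the job array and the subject lists is the part of the batch script that is easiest to get wrong, so here is the same logic sketched in Python. It is an illustration rather than a replacement for the bash script. The function names array_range and task_inputs are hypothetical; the subject codes and project code are the ones used above.

```python
SUBJECT_CBU_CODES = ["CBU090938", "CBU090964", "CBU090928"]
SUBJECT_LIST = ["02", "03", "04"]
PROJECT_CODE = "MR09029"


def array_range(n_subjects):
    """Value for '#SBATCH --array' that gives one task per subject, indexed from 0."""
    if n_subjects < 1:
        raise ValueError("At least one subject is needed")
    return f"0-{n_subjects - 1}"


def task_inputs(task_id):
    """Return the BIDS subject ID and raw data path handled by one array task."""
    if len(SUBJECT_CBU_CODES) != len(SUBJECT_LIST):
        raise ValueError("The two subject lists must have the same length")
    if not 0 <= task_id < len(SUBJECT_LIST):
        raise IndexError(f"Task index {task_id} has no matching subject")
    raw_path = f"/mridata/cbu/{SUBJECT_CBU_CODES[task_id]}_{PROJECT_CODE}"
    return SUBJECT_LIST[task_id], raw_path


print("--array=" + array_range(len(SUBJECT_LIST)))
# Inside a real job, SLURM provides the index in the SLURM_ARRAY_TASK_ID variable;
# here we simply loop over all indices to show the mapping.
for task_id in range(len(SUBJECT_LIST)):
    print(task_id, *task_inputs(task_id))
```

If the array range is larger than the number of subjects, the extra tasks get an empty subject ID and raw path in the bash script, so keep the range and the two lists in step whenever you add or remove subjects.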

More use cases

Multi-band acquisition with `sbref`

For multi-band functional scans, a single-band reference scan is typically acquired before the multi-band scan. Sometimes a functional run is cancelled due to some problem (a false start, a problem in the experimental code, a problem with the participant, etc.). In such a case, you might want to ignore the scan completely and not convert it to BIDS. For the functional images, we can specify in the heuristic file that only functional scans with, for example, at least 100 volumes are considered. A scan with fewer volumes would be ignored. In that case, we also need to ignore this scan's reference image. To do that, and to ensure that the correct single-band reference image is matched with the correct multi-band functional image, we need to include a few additional lines in the heuristic file. See the problem discussed on Neurostar.

Usually, the reference scan will be named SBRef as in the example below.

ls /mridata/cbu/CBU210700_MR21002/20211208_143530
Series001_64_Channel_Localizer/
Series002_CBU_MPRAGE_64chn/
Series003_bold_mbep2d_3mm_MB2_AP_2_SBRef/
Series004_bold_mbep2d_3mm_MB2_AP_2/
Series005_bold_mbep2d_3mm_MB2_AP_2_SBRef/
Series006_bold_mbep2d_3mm_MB2_AP_2/
Series007_bold_mbep2d_3mm_MB2_AP_2_SBRef/
Series008_bold_mbep2d_3mm_MB2_AP_2/
Series009_bold_mbep2d_3mm_MB2_AP_2_SBRef/
Series010_bold_mbep2d_3mm_MB2_AP_2/
Series011_bold_mbep2d_3mm_MB2_PA_2_SBRef/
Series012_bold_mbep2d_3mm_MB2_PA_2/
Series013_fieldmap_gre_3mm_mb/
Series014_fieldmap_gre_3mm_mb/

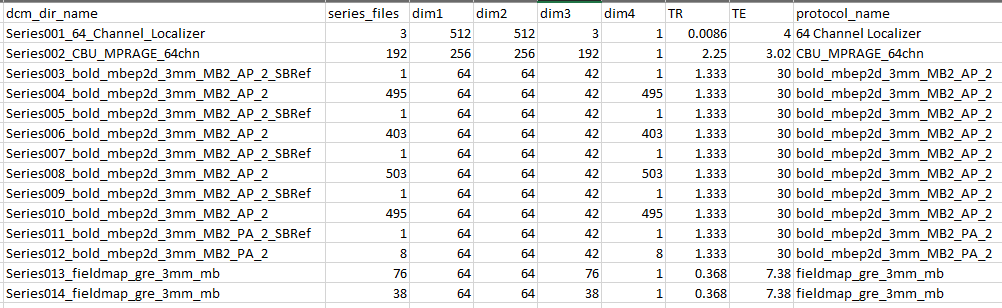

In this example, researchers acquired a T1w anatomical/structural scan (MPRAGE), four multi-band functional scans with single-band reference scans for each of them, an opposite phase encoding direction (PA) image to be used as a fieldmap, and two 'traditional' field maps. Below is the corresponding dicom_info table

The heuristic file for this example data is available on the GitHub repository.
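The pairing idea described in this section can be sketched in Python as follows. This is not the heuristic from the GitHub repository, only one possible approach under stated assumptions: each reference scan is acquired immediately before its functional run, its series description ends with SBRef (as the folder names above suggest), and the Series tuple is a mock with just three fields. The function name and the volume counts are invented for illustration.

```python
from collections import namedtuple

# Mock of the seqinfo fields used here; real entries have more fields.
Series = namedtuple("Series", ["series_id", "series_description", "dim4"])


def pair_sbref_with_bold(seqinfo, min_volumes=100):
    """Return (sbref_id, bold_id) pairs for functional runs with enough volumes."""
    pairs = []
    pending_sbref = None
    for s in seqinfo:
        if s.series_description.endswith("SBRef"):
            # Remember the reference until we have seen the run that follows it
            pending_sbref = s.series_id
        elif "bold" in s.series_description:
            if s.dim4 >= min_volumes:
                pairs.append((pending_sbref, s.series_id))
            # Whether kept or not, the run uses up its reference, so a
            # cancelled run drops its reference image as well
            pending_sbref = None
    return pairs


mock_seqinfo = [
    Series("3-bold_AP_SBRef", "bold_mbep2d_3mm_MB2_AP_2_SBRef", 1),
    Series("4-bold_AP", "bold_mbep2d_3mm_MB2_AP_2", 40),    # cancelled run
    Series("5-bold_AP_SBRef", "bold_mbep2d_3mm_MB2_AP_2_SBRef", 1),
    Series("6-bold_AP", "bold_mbep2d_3mm_MB2_AP_2", 300),   # complete run
]

pairs = pair_sbref_with_bold(mock_seqinfo)
print(pairs)
```

In a heuristic file, the two IDs of each pair would be appended to the lists of the sbref and bold templates respectively, so that both get the same run number.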

Multi-echo data

The BIDS standard requires that multi-echo data are split into one file per echo, using the echo-<index> entity. HeuDiConv will do this for you automatically! Therefore, when specifying the template name in your heuristic file, you don't need to include the echo entity. It will be added for you.
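To show what the resulting file names look like, here is a tiny Python illustration that places the echo entity just before the suffix, where BIDS expects it for a name like the functional template used earlier. This is not HeuDiConv's own code, and the function add_echo_entity is hypothetical; it only mimics the naming outcome for this simple case.

```python
def add_echo_entity(name, echo_index):
    """Insert 'echo-<index>' just before the suffix of a BIDS name (illustration only)."""
    entities, _, suffix = name.rpartition("_")
    return f"{entities}_echo-{echo_index}_{suffix}"


echo_names = [
    add_echo_entity("sub-01_task-facerecognition_run-01_bold", echo)
    for echo in (1, 2, 3)
]
for name in echo_names:
    print(name)
```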

Code examples

The example scripts described in this tutorial are available on our GitHub repository.

If you have any questions, please email Dace.